7 reasons why you shouldn't train LLMs with your own data

Published: March 13, 2026

Many companies are currently evaluating neural language models such as ChatGPT for use with their own data. For specific requirements, it may be tempting to fine-tune such a model, i.e. to continue training the language model on the company's own data. However, this approach has considerable disadvantages, especially in knowledge management but also beyond it.

Here are the reasons:

1. Difficult maintenance of the data

Once data has been absorbed into the "black box" of a language model, it is almost impossible to change or correct it selectively. In contrast to traditional databases, where changes can be made directly and transparently, the data in a language model is no longer stored as individual records and therefore cannot be edited or updated one by one.

Example: Imagine a bank that uses a language model to answer customer queries automatically. The model has been trained on data about a specific fee. If the bank changes this fee in its systems and documents, the language model cannot simply be updated to reflect the new information. Instead, procedures that require considerable AI expertise are needed to "delete" the old information and train in the new. Until then, customers could be misinformed, leading to confusion and possible financial consequences.

2. Retraining for extensions and updates

A significant problem is the need to retrain the model every time data is added. This is not only time-consuming and costly, but can also lead to inconsistencies in the training data if not all changes are taken into account at the same time. Volatile or constantly changing information cannot realistically be covered at all.

Example: A pharmaceutical company uses a language model to answer questions about drugs. If a new research result is published that concerns the side effects of a drug, the entire model must be retrained to integrate this new finding. This process can take weeks, during which time patients may receive incomplete or outdated information.

3. Hallucinations are difficult to control

Language models can have a tendency to "hallucinate" information, i.e. generate statements that are not based on the training data. This can lead to misinformation, which is particularly critical in a knowledge management system. Checking and verifying such misinformation is time-consuming and represents a considerable risk.

Example: A tourism company uses a language model to provide travel recommendations. A customer asks about safe destinations and the model "hallucinates" that a certain area is safe, even though there is currently political unrest. The customer could book a trip based on this false information and end up in danger.

4. Lack of transparency

Another problem with fine-tuned language models is the lack of transparency about how decisions are made or information is generated. By the very nature of the technology, it is impossible to reconstruct exactly where a given piece of information came from. This is precisely what makes such models almost unusable for important business or organizational decisions. If no source can be given for a statement, companies lose control over the information that is output and are very likely to run into compliance issues.

Example: A mechanical engineering company uses a language model to automatically answer technical questions about its products. A customer asks for the specifications of a particular machine part because they are considering using it in their own production process. The language model provides an answer stating that this part can withstand a temperature of up to 500 degrees Celsius.

Without knowing the exact source or context of this information, the customer relies on it and uses the part in a high-temperature process. It later turns out that the model misinterpreted the information and the part is actually only designed for temperatures up to 400 degrees Celsius. This leads to a failure in the customer's production and potentially significant damage, both financial and safety-related.

The Perfect Solution for you

We look forward to a non-binding consultation and will be happy to work with you to determine which product provides the greatest value for your needs. Let’s make better decisions together, faster.

5. Unpredictable costs

Fine-tuning models, especially large ones, requires significant computing power. This can lead to unexpected and increasing costs, especially if regular updates or retraining are required.

Extended example: A mechanical engineering company, let's call it "TechMach GmbH", wants to use a language model to answer customer queries regarding technical specifications, maintenance instructions and ordering information for its machine parts. Since TechMach GmbH has specific and unique products, it decides to fine-tune a pre-trained language model with its own company-specific data.

- Cost factor: data cleansing and preparation

Before the actual training can even start, TechMach GmbH has to sift through, cleanse and format its data. For this step, it hires a data scientist and two technicians who work together for a month. Assuming the data scientist earns €70/hour and the technicians €50/hour each, with a 40-hour week and four weeks per month, this step alone costs around €27,200 (€170/hour × 160 hours).

- Cost factor: computing power and memory

Fine-tuning requires powerful GPUs or TPUs. Depending on the size of the model and the volume of data, this can quickly become expensive. Simple fine-tuning can cost several thousand euros, while a more extensive project can easily run into the six-figure range. Let's assume that TechMach GmbH estimates €50,000 in computing and storage costs for its project.

- Cost factor: skilled workers and time

A team of two machine learning experts, each earning €80/hour, works for two months to train and optimize the model. That's another €51,200 (2 × €80/hour × 320 hours).

- Cost factor: tests and adjustments

Once the model has been trained, it needs to be tested and possibly readjusted. This cycle can be repeated several times. Assuming that this process takes another month and involves additional personnel (testers, engineers, etc.), this could cost another €50,000.

- Cost factor: unforeseen problems

As with any technical project, unexpected problems can arise, whether due to errors in the data, technical problems or difficulties integrating the model into the existing IT infrastructure. Such unplanned effort could easily cost another €20,000 or more.

In total, the costs for fine-tuning in this example come to roughly €200,000 for TechMach GmbH, and that is still a conservative estimate. It is clear that the costs for fine-tuning language models can rise quickly and therefore need to be planned and budgeted for carefully.
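The estimate can be recomputed from the hourly rates, headcounts and durations stated in the example. The sketch below assumes four 40-hour weeks per month and takes the flat amounts for compute, testing and unforeseen problems as given; the names `HOURS_PER_MONTH`, `labour_cost` and `cost_items` are illustrative.

```python
# Back-of-the-envelope recomputation of the TechMach GmbH estimate.
# Assumption: one month = 4 weeks x 40 hours = 160 working hours.
HOURS_PER_MONTH = 4 * 40


def labour_cost(hourly_rates, months):
    """Cost of a team working full time: sum of hourly rates x hours worked."""
    return sum(hourly_rates) * HOURS_PER_MONTH * months


cost_items = {
    # one data scientist (€70/h) and two technicians (€50/h each), one month
    "data cleansing and preparation": labour_cost([70, 50, 50], months=1),
    # flat estimate for GPU/TPU compute and storage
    "computing power and memory": 50_000,
    # two ML experts at €80/h each, two months
    "skilled workers and time": labour_cost([80, 80], months=2),
    # another month of testing and readjustment (flat estimate)
    "tests and adjustments": 50_000,
    # buffer for unplanned effort
    "unforeseen problems": 20_000,
}

total = sum(cost_items.values())

if __name__ == "__main__":
    for item, cost in cost_items.items():
        print(f"{item:<32} €{cost:>9,}")
    print(f"{'total':<32} €{total:>9,}")
```

Changing `HOURS_PER_MONTH` or a team's rates shows how sensitive the total is to staffing assumptions.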

6. No roles-and-rights principle can be implemented for language models

In many IT systems, especially those used for business purposes, the roles and rights principle is of crucial importance. It determines who can access which information and carry out which actions.

With language models, however, it is almost impossible to implement such differentiated access management. If certain information is integrated into the model, anyone who has access to the model can also retrieve this information.

The problem becomes even more serious once so-called "prompt injection attacks" are taken into account. Attackers can use cleverly formulated prompts to make the model reveal information or behave in a certain, undesired way. This means that even if fine-tuning has been used to try to establish roles and rights in the model, these can be circumvented by such attacks.
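If the knowledge stays outside the model, as in the retrieval approach described at the end of this article, permissions can instead be enforced in ordinary code before any text reaches the model. A minimal sketch, assuming each document carries a set of allowed roles; the `Document` class, `visible_documents` function, role names and contents are hypothetical:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles permitted to read this document


def visible_documents(documents, user_roles):
    """Keep only the documents at least one of the user's roles may read.

    Applied to retrieval results before the prompt is built, so text the
    user is not entitled to never reaches the language model.
    """
    roles = set(user_roles)
    return [doc for doc in documents if doc.allowed_roles & roles]


# Hypothetical documents and role names, for illustration only.
DOCS = [
    Document("price-list", "Public list prices for machine parts.",
             frozenset({"customer", "sales"})),
    Document("margin-report", "Internal margins per product line.",
             frozenset({"controlling"})),
]
```

With this design, a prompt injection cannot extract company documents the filter withheld, because they are never part of the prompt.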

7. No targeted search possible

While traditional database systems allow you to search for specific data or information, language models work probabilistically and generate answers based on patterns in the training data.

The lack of targeted search functionality can lead to inefficiencies, especially when fast and accurate answers are required. It can also increase the risk of misunderstandings and errors, as the model cannot always recognize the exact context or intent behind a query.
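A targeted search is deterministic: the same query returns the stored value or nothing at all. A minimal sketch with Python's built-in `sqlite3` module and a hypothetical parts table (part numbers, names and values are illustrative):

```python
import sqlite3

# In-memory database with a hypothetical parts table (illustrative data only).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE parts (part_no TEXT PRIMARY KEY, name TEXT, max_temp_c INTEGER)"
)
conn.executemany(
    "INSERT INTO parts VALUES (?, ?, ?)",
    [
        ("MP-100", "bearing housing", 400),
        ("MP-200", "drive shaft", 250),
    ],
)


def max_temperature(part_no):
    """Exact lookup: returns the stored value, or None if the part is unknown."""
    row = conn.execute(
        "SELECT max_temp_c FROM parts WHERE part_no = ?", (part_no,)
    ).fetchone()
    return row[0] if row else None
```

A language model asked the same question produces the most probable answer given its training, which is not guaranteed to match any stored record.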

The alternative: RAG (Retrieval Augmented Generation)

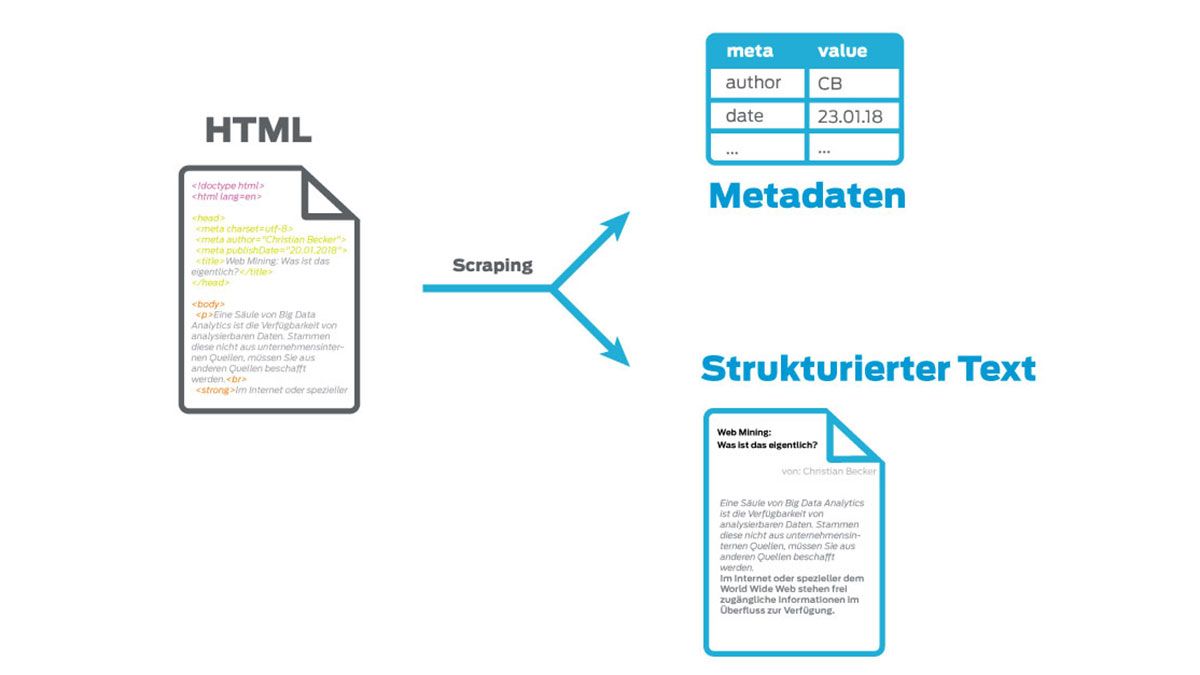

RAG (Retrieval Augmented Generation) is a novel method of information retrieval and generation that integrates both machine learning techniques and traditional search engine concepts. At its core, it combines the strengths of language models with those of information retrieval systems to provide accurate and relevant answers to user queries.

In contrast to fine-tuning, RAG offers a flexible and efficient solution for knowledge management tasks. Instead of integrating knowledge directly into the model, RAG searches a database for relevant information and generates answers based on it.

The principle is quickly explained:

1. A query is received by a Q/A system (e.g. a knowledge management system).
2. The Q/A system intelligently filters suitable content from the database. The more intelligent and better the search technologies and methods, the better the subsequent response quality.
3. The content is converted into text form.
4. (Optional) If required, the language model can be given a desired role using a custom prompt (e.g. to specify tone, form of address and response length).
5. The text is passed to the language model together with the custom prompt and the original input.
6. The language model generates an answer based on the knowledge in the filtered content.
7. For follow-up questions, the context is retained; if the context changes, a new query is fired and the process starts again at step 1.
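The seven steps above can be sketched in a few dozen lines. This is a toy illustration, not a production design: word overlap stands in for the "intelligent" search of step 2, an in-memory dictionary stands in for the database, and `generate` is a placeholder for whichever language model API is used. All class, function and document names are hypothetical.

```python
import re
from dataclasses import dataclass, field


def tokenize(text):
    """Lower-case word tokens; a deliberately simple stand-in for real search."""
    return set(re.findall(r"\w+", text.lower()))


@dataclass
class RagSession:
    documents: dict                              # knowledge base: doc_id -> text
    role_prompt: str = ""                        # step 4: optional custom prompt
    history: list = field(default_factory=list)  # step 7: retained context

    def retrieve(self, query, k=2):
        """Step 2: rank documents by word overlap with the query, keep the top k."""
        q = tokenize(query)
        scored = [(len(q & tokenize(text)), doc_id)
                  for doc_id, text in self.documents.items()]
        ranked = sorted((s for s in scored if s[0] > 0), reverse=True)
        return [doc_id for _, doc_id in ranked[:k]]

    def build_prompt(self, query, doc_ids):
        """Steps 3-5: retrieved content as text, plus custom prompt,
        conversation so far and the original input."""
        context = "\n".join(f"[{d}] {self.documents[d]}" for d in doc_ids)
        turns = "\n".join(self.history)
        return (f"{self.role_prompt}\n"
                f"Answer only from this content:\n{context}\n"
                f"Conversation so far:\n{turns}\n"
                f"Question: {query}")

    def ask(self, query, generate):
        """Steps 1-6; `generate` is a placeholder for any LLM call."""
        doc_ids = self.retrieve(query)
        answer = generate(self.build_prompt(query, doc_ids))
        self.history += [f"User: {query}", f"Assistant: {answer}"]  # step 7
        return answer, doc_ids

    def new_topic(self):
        """Step 7: when the context changes, start again with an empty history."""
        self.history.clear()


# Illustrative usage with made-up content and a stub instead of a real model.
kb = {
    "fees": "The account management fee is 5 euros per month.",
    "cards": "A replacement debit card costs 10 euros.",
}
session = RagSession(kb, role_prompt="You are a polite bank assistant. Answer briefly.")
answer, sources = session.ask("What is the account management fee?",
                              generate=lambda prompt: "stub answer")
```

In a real system, `retrieve` would be backed by a search engine or vector index and `generate` would call the model's API; the surrounding loop stays the same.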

This allows you to talk to a language model as if it had read your data without ever having been trained on it.

RAG represents a revolutionary fusion of creative language generation and precise, targeted data retrieval. To realize the full potential of this system, let's take a closer look at its key benefits:

Direct and transparent updating of the data source: While traditional systems often require complex reconfigurations or even manual intervention to make data changes, RAG allows you to update data easily and directly. You always access your content in real time, even if it has just been changed.

No need to retrain when data changes: Training large neural networks can be time-consuming and expensive. With RAG, there is no need to retrain the system every time data changes. Instead, the language model itself stays unchanged; only the underlying knowledge base is updated, and each query retrieves the current content.
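Taking the bank fee from reason 1 as an example: in a retrieval setup the fee lives in the data source, so changing it is an ordinary write. A toy sketch, assuming an in-memory dictionary as the document store and a simple word-overlap retriever (the names `words`, `retrieve` and `knowledge_base` and the fee amounts are illustrative):

```python
import re


def words(text):
    """Lower-case word tokens of a text."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(query, kb):
    """Toy retriever: return every text that shares a word with the query."""
    q = words(query)
    return [text for text in kb.values() if q & words(text)]


# Hypothetical document store: doc_id -> current text.
knowledge_base = {"fee-schedule": "The monthly account fee is 4.90 euros."}
before = retrieve("monthly account fee", knowledge_base)

# The bank changes the fee: a single write to the data source, no retraining.
knowledge_base["fee-schedule"] = "The monthly account fee is 5.90 euros."
after = retrieve("monthly account fee", knowledge_base)
```

The next query already retrieves the new text, so the model is handed the current fee without any change to its weights.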

Better control over the responses generated: While language models are often considered "black boxes" where the origin and context of answers are difficult to grasp, RAG provides a clear structure that allows users to understand and trace the origin of answers. When answers are generated, the document from which the content originates can always be named (and linked directly).
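Because the retrieval step knows which passages it handed to the model, the answer can be returned together with its sources. A minimal sketch, assuming each retrieved passage carries a source URL; the `Passage` and `Answer` classes, the URL and the contents are hypothetical, and `generate` is a placeholder for any LLM call:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    text: str
    source_url: str  # where the passage came from


@dataclass(frozen=True)
class Answer:
    text: str
    sources: tuple  # URLs of the passages that were given to the model


def answer_with_sources(question, passages, generate):
    """Build the prompt from the retrieved passages and return the model's
    answer together with the sources it was shown."""
    numbered = "\n".join(f"[{i}] {p.text}" for i, p in enumerate(passages, start=1))
    prompt = (
        "Answer using only the numbered passages and cite them as [n].\n"
        f"{numbered}\nQuestion: {question}"
    )
    # Keep first-seen order and drop duplicate URLs.
    sources = tuple(dict.fromkeys(p.source_url for p in passages))
    return Answer(generate(prompt), sources)


# Illustrative usage with made-up passages and a stub instead of a real model.
passages = [
    Passage("Part MP-100 is rated for up to 400 degrees Celsius.",
            "https://example.com/datasheets/mp-100.pdf"),
    Passage("MP-100 must not be operated above this limit.",
            "https://example.com/datasheets/mp-100.pdf"),
]
result = answer_with_sources("What is the maximum temperature of MP-100?", passages,
                             generate=lambda prompt: "Up to 400 degrees Celsius [1].")
```

The returned sources reliably list what the model was shown; whether each `[n]` citation in the generated text is faithful still deserves a spot check.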

In addition, the other issues mentioned above that make fine-tuning problematic are also largely off the table. One advantage not yet mentioned deserves explicit emphasis: data security. With this principle, there is no need to train a language model hosted by a third party on your data. If you remove the database, the language model can no longer provide information from it. You therefore remain in control of your data.

Conclusion

All in all, RAG is not only a useful alternative to fine-tuning language models, but also superior in many applications. It is a technology that combines the creative power of language models with the precision and reliability of knowledge management systems. The result? A state-of-the-art solution that may soon have a significant impact on the way we retrieve information.
