Unleashed LLMs despite token restrictions

Published: March 13, 2026

Due to the hype surrounding Large Language Models (LLMs), many companies are planning to implement chatbot-based interaction with their internal knowledge bases. This new form of user interaction can certainly be seen as an innovative development in the handling of knowledge, even if it is not entirely free of risks and limitations. One of these limitations is the token limit, which restricts how much text these powerful models can process at once.

A token, in the context of LLMs, is the basic unit into which a model splits text so that it can process it. Tokens are usually small and can be whole words, single characters or parts of words, depending on how the model's tokenizer was trained.

To get a feel for how many tokens a text contains, you can use a token calculator: https://platform.openai.com/tokenizer

Example:

In this example you can see that the 443 characters (49 words) of a short bio amount to 148 tokens for GPT-3.

Now that we know how many tokens the text input has, we can look at the token limits of some language models (as of August 2023; the 32k-token context for GPT-4 is available only via the API):

This limitation is the reason why you cannot simply pass the entire knowledge base as context to a language model (apart from the fact that this would be extremely expensive, as LLM providers charge per token). Even Claude 2 (only available in the UK and US), with its comparatively impressive 100k token limit, would already fail on a single larger manual, not to mention a search across many documents.
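As a rough illustration of this budget problem, the following sketch estimates a token count from the character count and checks it against a limit. The ratio of about 3 characters per token is an assumption derived only from the bio example above (443 characters, 148 tokens); real tokenizers differ by model and language. The function names and the 4,096-token limit are hypothetical and chosen for illustration.

```python
import math

# Assumption: about 3 characters per token, taken from the bio example
# in this article (443 characters, 148 tokens). Real tokenizers differ.
CHARS_PER_TOKEN = 3.0


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Crude token estimate based on character count only."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(text: str, token_limit: int, reserved_for_answer: int = 0) -> bool:
    """Return True if the estimated prompt size leaves room for the answer."""
    return estimate_tokens(text) + reserved_for_answer <= token_limit


bio = "x" * 443              # stand-in for the 443-character bio
manual = "x" * 1_000_000     # stand-in for a larger manual

print(estimate_tokens(bio))                 # 148
print(fits_in_context(bio, 4_096))          # illustrative small limit
print(fits_in_context(manual, 100_000))     # the 100k limit mentioned above
```

For exact counts you would use the tokenizer that belongs to the model in question; the estimate is only good enough to see that a large manual exceeds even a 100k limit by a wide margin.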

This is what happens when the input prompt is too long:

How can a language model effectively crawl large documents while maintaining model performance? The solution lies in applying a variety of innovative strategies and technologies.

Mastering the challenges of the token limit

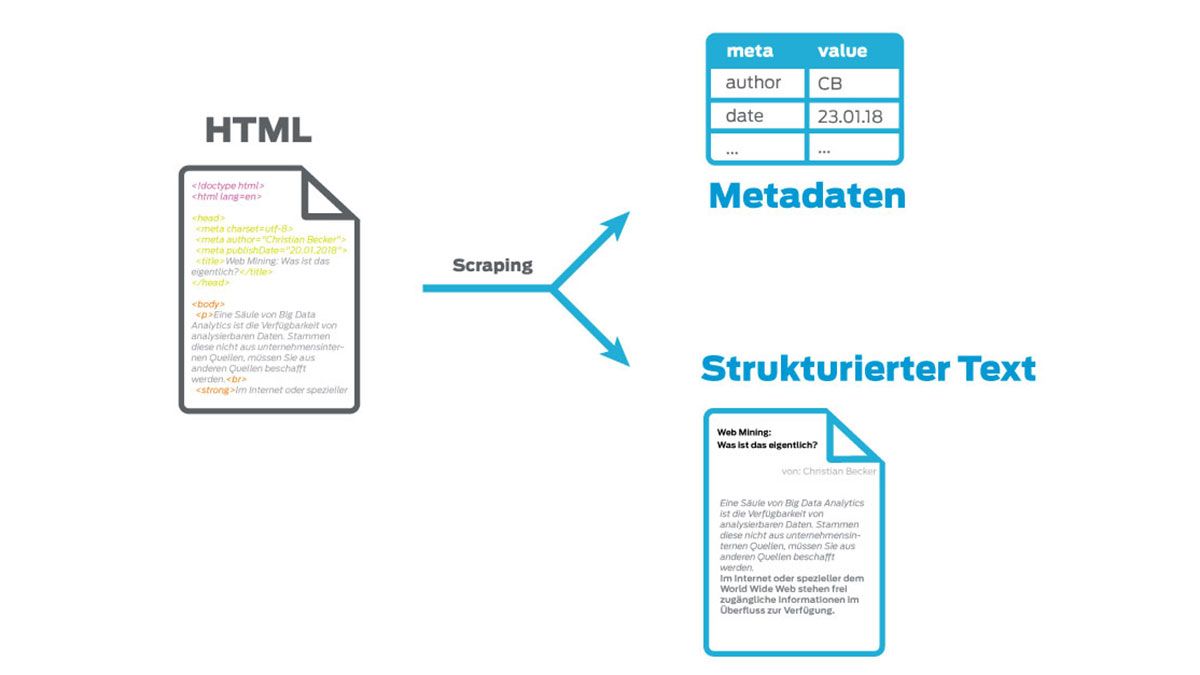

The first step does not yet require a language model, but a proven and mature approach, as is already used in knowledge management today: an intelligent search first receives the user's query and filters out only relevant content from the knowledge base before passing it to a language model as an input prompt. What sounds trivial already involves a great deal of (AI) technology and providers have been continuously optimizing their technology for years to accomplish this task. The principle of this interaction is called Retrieval Augmented Generation (RAG).

RAG lays the foundation for accessing your own knowledge base with language models. This does not directly eliminate the token limit problem, but you can build on this foundation and use other strategies to minimize the symptoms.
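The retrieve-then-prompt principle can be sketched in a few lines. This is a minimal sketch under stated assumptions, not a production search: a simple word-overlap score stands in for the "intelligent search", the character budget stands in for a token budget, and all names (`retrieve`, `build_prompt`, `knowledge_base`) are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a stand-in for a real search engine's analysis."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, passages: list[str], top_k: int = 2) -> list[str]:
    """Rank passages by word overlap with the query and keep the best ones."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(p)), p) for p in passages]
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [p for _, p in scored[:top_k]]


def build_prompt(query: str, passages: list[str], max_chars: int = 2000) -> str:
    """Assemble the prompt, adding passages only while the budget allows."""
    context: list[str] = []
    used = 0
    for passage in passages:
        if used + len(passage) > max_chars:
            break
        context.append(passage)
        used += len(passage)
    joined = "\n".join(f"- {p}" for p in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


knowledge_base = [
    "The crane must be anchored to the foundation before operation.",
    "Vacation requests are submitted through the HR portal.",
    "Anchoring the crane requires a torque wrench and anchor bolts.",
]

question = "How do I anchor the crane?"
relevant = retrieve(question, knowledge_base)
prompt = build_prompt(question, relevant)
print(prompt)
```

Only the passages selected by the search end up in the prompt, so the prompt size depends on `top_k` and the budget rather than on the size of the knowledge base.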

Chunking

In the context of building LLM-related applications, chunking is the process of breaking large texts into smaller segments. It is an essential technique that helps to optimize the relevance of the content we get back from a vector database once the content has been embedded.

The benefit of chunking is to ensure that content is embedded with as little noise as possible, but is still semantically relevant.

For example, semantic search indexes a corpus of documents, with each document containing valuable information on a particular topic. Applying an effective chunking strategy ensures that the search results accurately capture the essence of the user query. If the chunks are too small or too large, this can lead to inaccurate search results or missed opportunities to display relevant content. As a rule of thumb, if the block of text makes sense to a human without the surrounding context, it will also make sense to the language model. Therefore, it is crucial to find the optimal block size for the documents in the corpus to ensure that the search results are accurate and relevant.

Sometimes semantically related content can exist across multiple sections or even across documents. For example, a user requests the procedure for anchoring a construction crane, but the tools required for the work steps are not directly described in the same paragraph.

This becomes even more critical when safety instructions need to be taken into account. Several variables therefore play a role in determining the best chunking strategy, and these vary depending on the application and the underlying data. They also depend on the embedding models used and, of course, on the token limit.

From a technical point of view, splitting content is fairly simple in most cases. It is advisable to search for the terms content-aware chunking or fixed-size chunking. The difficulty lies in the fact that there is no universal solution for chunking and the optimization must ultimately fit the use cases.
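To make the two terms concrete, here is a minimal sketch of both approaches using only the Python standard library. It assumes that chunk size is measured in characters and that paragraphs are separated by blank lines; the function names and the sample text are hypothetical.

```python
def fixed_size_chunks(text: str, size: int, overlap: int = 0) -> list[str]:
    """Split text into windows of `size` characters that overlap by `overlap`."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    return [text[start:start + size] for start in range(0, len(text), step)]


def paragraph_chunks(text: str, max_chars: int) -> list[str]:
    """Content-aware variant: keep paragraphs whole and merge neighbours
    until adding the next one would exceed max_chars."""
    chunks: list[str] = []
    current = ""
    for paragraph in (p.strip() for p in text.split("\n\n")):
        if not paragraph:
            continue
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = paragraph
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


manual = (
    "Step 1: Position the crane.\n\n"
    "Step 2: Attach the anchor bolts.\n\n"
    "Safety: Wear a helmet at all times."
)

print(fixed_size_chunks("abcdefghij", size=4))
for chunk in paragraph_chunks(manual, max_chars=70):
    print(repr(chunk))
```

The fixed-size variant cuts wherever the window ends, even in the middle of a word, while the paragraph variant keeps each step intact. Note that in this sketch a single paragraph longer than `max_chars` is kept whole rather than split further.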

Embedding

Why do search engines find what you are looking for so quickly? Why do recommendation systems "know" exactly which product or movie you might like? The answer: vector embeddings!

What are vector embeddings?

Vector embeddings help us to organize things so that similar things are close to each other in a vector space.

In a vector space for embeddings, as used in natural language processing or other machine learning applications, the axes do not represent specific, named properties such as "weight" or "sweetness", as would be the case with concrete, physical objects. Instead, each axis represents an abstract dimension that has been learned by training the model.

How does this work?

To achieve this, each object (for example, an apple) is represented as a list of numbers (a "vector"). This list describes what the object is like. As a simplified illustration, the list for an apple could encode properties such as [red, round, sweet]; in a real embedding, the individual numbers have no such readable meaning. These lists help computers to understand and organize things.

Vectors are an ideal data structure for machine learning algorithms. Modern CPUs and GPUs are optimized to perform the mathematical operations required to process them. But the data is rarely represented as vectors. This is where vector embedding comes into play. With this technique, we can represent virtually any type of data as vectors.

The aim is to ensure that tasks can be performed on this transformed data without losing the original meaning of the data. For example, if you want to compare two sentences, you should not only compare the words they contain, but also whether or not they mean the same thing. To preserve the meaning of the data, you need to understand how to create vectors where relationships between the vectors make sense.

This is where embedding models come in. Many modern embeddings are created by passing a large amount of labeled data to a neural network. Once trained, the network can predict what an output label should look like for a particular input, even if it has never seen that particular input before.

Words whose vectors lie close together are semantically similar, while words whose vectors are far apart have different meanings.

After training, an embedding model can convert the raw data into vector embeddings. This means that it knows where to place new data points in the vector space.

Each time a user asks a question, their question is also converted into a vector and the vectors of our document parts that are closest to the question vector are determined using cosine distance. We search for the most suitable vectors that are likely to contain information on the topic.
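The lookup described here can be sketched as follows. The three-dimensional vectors are made up for illustration, since real embedding models produce hundreds or thousands of dimensions, and the names `cosine_similarity`, `nearest_chunks` and `chunk_vectors` are hypothetical. Cosine distance is simply 1 minus cosine similarity, so the closest vectors are the ones with the highest similarity.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def nearest_chunks(
    query_vec: list[float],
    chunk_vecs: dict[str, list[float]],
    top_k: int = 2,
) -> list[str]:
    """Return the names of the top_k chunks most similar to the query."""
    ranked = sorted(
        chunk_vecs,
        key=lambda name: cosine_similarity(query_vec, chunk_vecs[name]),
        reverse=True,
    )
    return ranked[:top_k]


# Made-up vectors; in practice an embedding model would produce these.
chunk_vectors = {
    "crane anchoring": [0.9, 0.1, 0.0],
    "required tools": [0.7, 0.6, 0.1],
    "vacation policy": [0.0, 0.1, 0.9],
}
query_vector = [0.8, 0.3, 0.0]   # pretend embedding of the user's question

print(nearest_chunks(query_vector, chunk_vectors))
```

A vector database performs the same comparison, but uses index structures so that it does not have to compare the query against every stored vector.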

Embedding converts text elements into vectors that represent the semantic similarity between different text elements. This technique is essential for quickly and precisely identifying relevant information in large documents and thus increasing the efficiency of information retrieval.

Food for thought for the future: pre-processing information

Until now, documents have essentially been written by people for people. Over time, a wide variety of formats have become established for the different purposes for which these documents are used. For example, many documents have a title, a table of contents and page numbers because they make it easier for people to read and understand them.

The question you have to ask yourself: If more and more content is created not only for human readers but also for artificial intelligence, does it need to be created according to the same principles?

At what point is the critical mass of content processing by language models reached, so that content creation needs to be rethought? If processes such as text summarization, keyword extraction and the methods mentioned above are used anyway, a lean information approach as a pre-processing stage could drastically shorten documents without losing content.

Ironically, language models can do this very well. And it doesn't have to happen live, but can run "cold" in the background when the content is not being queried. Every time a document is uploaded, a lean twin is created that is only used when a language model is queried. Admittedly, this approach is still the subject of further research, but the idea is amusing: for a long time, people have been thinking about how to make content more accessible to machines. Perhaps it will soon be the other way around.

One of the founders of OpenAI said: "Prediction is compression."

Conclusion

Although the token limit varies between language models, it will undermine any use in knowledge management unless additional approaches and methods such as those described above are explored. Alternatively, you can work with providers who offer everything from a single source.
